By Mustafa Keskin, Corning Optical Communications

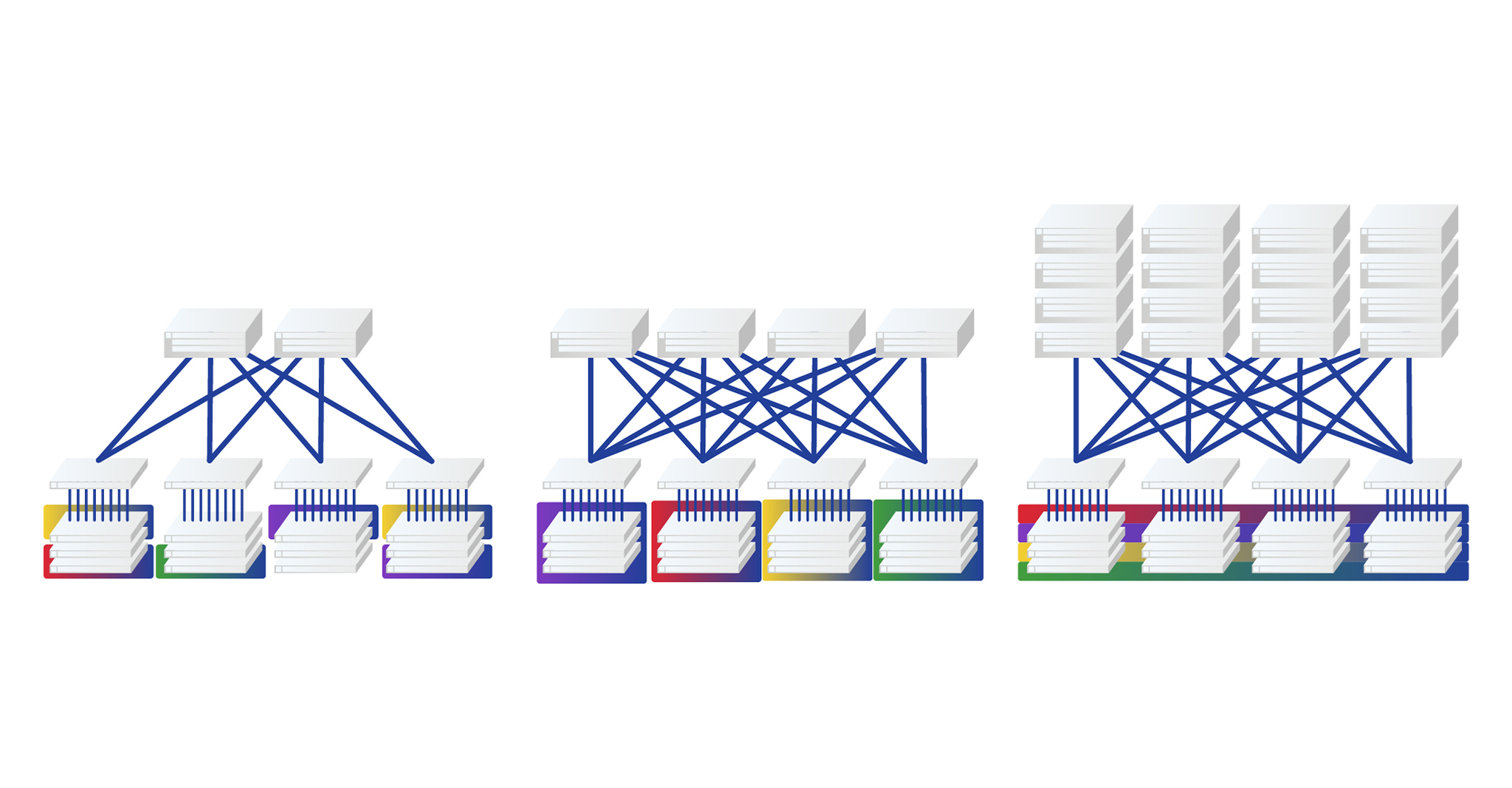

As networks grew over the last decade, we saw a shift from the classical three-tier network architecture to a flatter, wider spine-and-leaf architecture. With its fully meshed connectivity, in which every leaf switch connects to every spine switch, spine-and-leaf architecture gave us the predictable high-speed network performance we were craving, along with reliability within our network switch fabric.

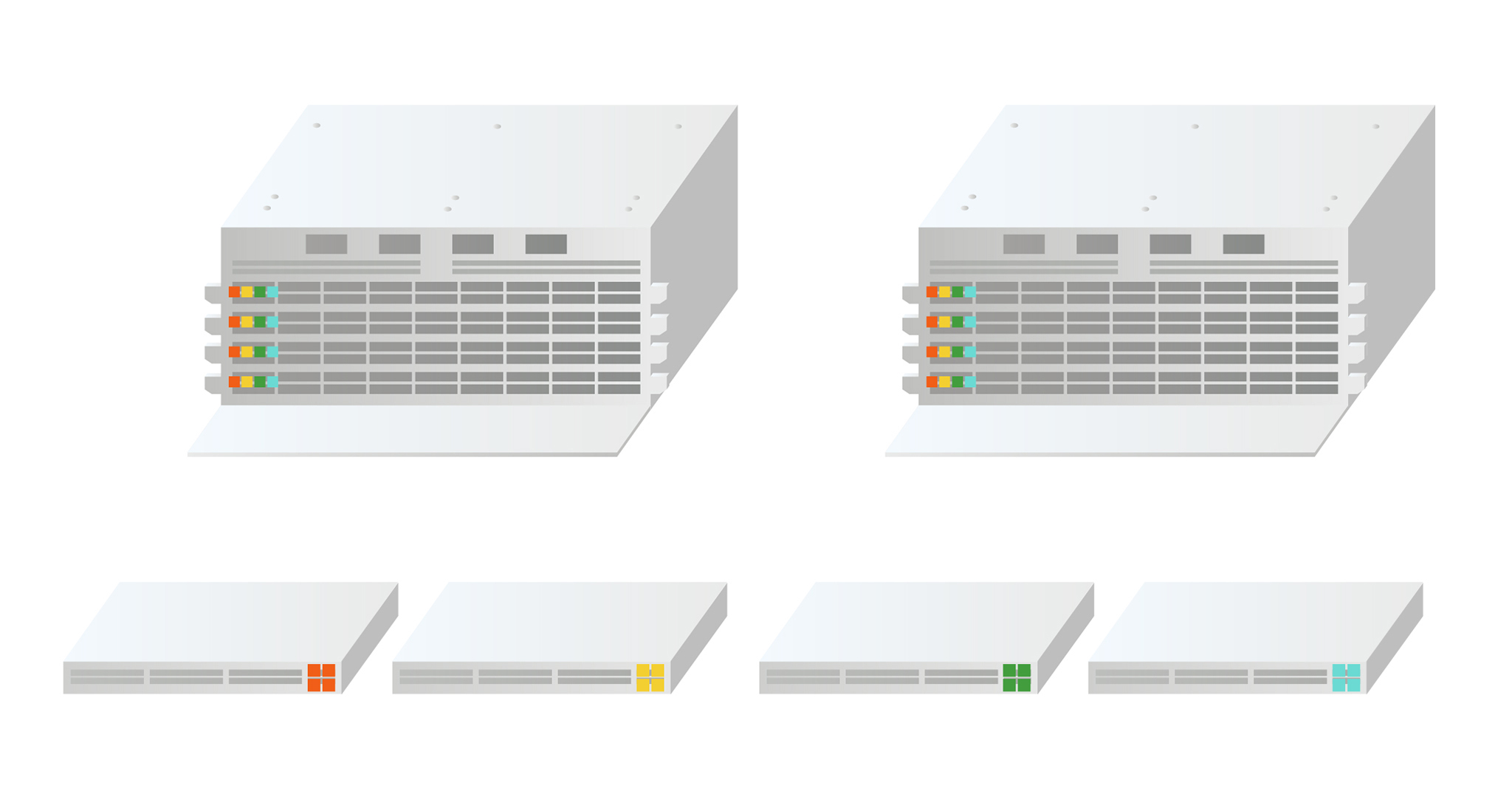

Along with its advantages, spine-and-leaf architecture presents challenges for structured cabling. In this article, we will examine how to build a four-way spine and scale it to larger spines (such as a 16-way spine) while maintaining wire-speed switching capability and redundancy as we grow. We will also explore the advantages and disadvantages of two approaches to building our structured cabling main distribution area: one uses classical fiber patch cables, and the other uses optical mesh modules.
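To get a rough feel for why cabling becomes a challenge as the spine grows, here is a minimal sketch that counts leaf-to-spine links in a full mesh. It assumes exactly one link per leaf-spine pair; the function name `fabric_links` and the leaf count of 8 are hypothetical values chosen for illustration, not figures from this article.

```python
def fabric_links(spines: int, leaves: int) -> int:
    """Count leaf-to-spine links, assuming one link per leaf-spine pair."""
    return spines * leaves


# Hypothetical leaf count, used only to compare the two spine widths.
LEAVES = 8

for spines in (4, 16):
    links = fabric_links(spines, LEAVES)
    print(f"{spines}-way spine with {LEAVES} leaves: {links} links")
```

Under these assumptions, the number of links grows in direct proportion to the spine width, so every one of them has to be accounted for in the main distribution area.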

A fundamental knowledge of spine-and-leaf architecture is crucial as you begin to modernize your data center's infrastructure. Learn more about the basics of this type of network design, and how to embrace it, in our Spine-and-Leaf Architecture 101 article.